Insight

If you work in pharma, you’re probably using your data all wrong. I know I did.

The first time I proposed using AI to analyse electronic health record data to stratify patients for risk of rare diseases, a few eyebrows were raised.

The first question was “Isn’t that just RWE?” As I saw it, RWE (real-world evidence) algorithms already existed, of course, but the AI element and the final use of the data were not aligned correctly to deliver real-world impacts on patient outcomes.

RWE reports were often used for market intelligence at a corporate level, for siloed R&D projects or for clinical trial recruitment, and on some rare occasions to stratify patients by risk. But they weren’t being used to directly influence an HCP’s next course of action (in this case, testing for a rare disease) based on patient-specific insights.

At the time, I wasn’t sure if the concept was feasible, but I was confident that the technology already existed. So logically, the question was whether I could find the right team to bring it all together.

A year on, and after more failures than successes, my small test project had proven its worth and secured over $40 million in research funding, spanning not just rare-disease diagnosis but also the stratification of life-threatening adverse events such as cytokine release syndrome in CAR-T patients, which at the time was incredibly new, with little data to analyse. Jump forward another four years to June 2023, and the AI revolution has truly arrived in healthcare, with leading EHR companies like Epic beginning to integrate GPT-4 into their systems.

But even with these major advances in technology, data is still not quite being used to its full potential.

Data is the key to (re)building trust between pharma and healthcare providers

From the outset, it’s critical to understand what data represents across all stakeholders. After all, the reason AI technologies were not deployed in EHRs earlier was not because it couldn’t be done; it was because the data itself was only being viewed from a closed perspective among a siloed group of non-patient-focused analysts.

In healthcare, the exact same data holds different value and meaning (in terms of what it represents, or its purpose) for different stakeholders. To HCPs, data is the most important factor, bar none, in making clinical decisions. To a researcher or pharma company, data is a source from which to develop educational objectives, marketing materials, branding, or regulatory/medical affairs content.

But a subtle yet problematic disconnect persists between HCPs and pharma, and it boils down to trust.

Following the opioid crisis in the US, HCP trust in pharmaceutical marketing and commercial activities hit an all-time low, and it has yet to fully recover. As a result, pharma spend on independent / grant-funded medical education activities has (quite rightly) grown. But the less obvious issue remains around data and what it means to HCPs, not what the data might prove.

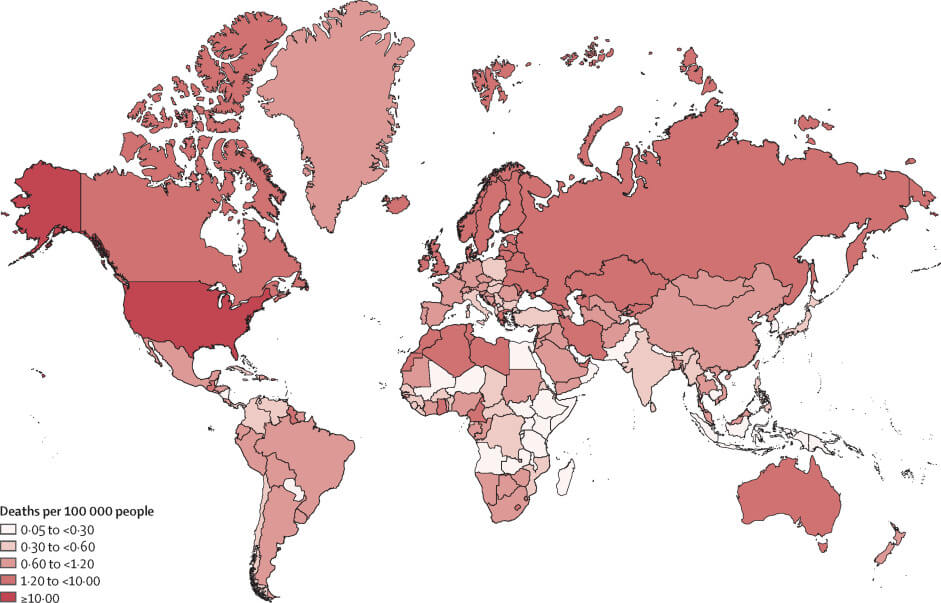

Visualising opioid overdose deaths per 100,000 people. Source: Big data and predictive modelling for the opioid crisis, published in The Lancet Digital Health.

Had Purdue published its data in full, the opioid crisis would, in all likelihood, not have happened. Of course, Purdue’s conduct was proven to be outright criminal, as the data did not support the FDA insert label’s declaration that OxyContin could reduce opioid addiction. Now, HCPs are quite rightly demanding full access to the data to make the decisions themselves, using regulator approvals/recommendations as a prerequisite to conduct further due diligence so that they don’t walk into another Purdue-level disaster. And who can blame them?

On the flipside, you have the pharma companies that, despite the tightening of regulatory frameworks, will often bear the collective brunt of one bad actor or rogue profiteer’s transgressions.

But here’s the thing. The evidence was always there in the form of the raw data if anyone had demanded to see the research. So you could argue that the scientific process was not compromised; it was the processes designed to maintain the highest levels of transparency and integrity that were ultimately perverted. And HCPs understand this.

What pharma has so far failed to see is that their most valuable resource is the data itself. It’s just that they’re almost always using it in the wrong way. I’ve worked on many examples where this has been the case, and I’ve been guilty of it, too.

Visual data holds the key to better pharma-HCP alignment

Let’s start with some horizon scanning and consider the following factors:

- The vast majority of HCPs will make clinical decisions based on scientifically verifiable data.

- HCPs do not trust pharma-created content (mostly).

- HCPs trust data (almost always).

- Pharma has data (but not all).

- Pharma creates marketing materials, cherry-picking data (often from their own narrow internal sources) for educational objectives, or commissioning independent medical education activities.

- Pharma does not include comprehensive comparator data and only selects narrow educational objectives.

- HCPs aren’t detectives, and they resent having to search for trusted data across disparate sources.

- HCPs would rather not spend hours doing CME/IME courses – they only do so because they have to.

- Comprehensive comparator data, required for an HCP to make fully-informed clinical decisions (or to actually change practice), is very rarely included in today’s IME/CME modules (and even if it is, the format is didactic).

Given all of the above, let’s throw data visualisation into the mix:

- Interactive dataviz modules can provide a comprehensive view of an entire treatment landscape.

- The visualisations are based on raw data and should therefore be trusted more by HCPs.

- If needed, KOLs can still quickly signpost HCPs to salient points in the data (improved efficacy, reduced AEs, etc.), while still allowing the data to do the talking.

- HCPs can make quick clinical decisions in minutes based on scientific evidence, instead of hours or even days.

- Dataviz resources can be easily updated and time-stamped for additional trust-building and for future quick reference.

- Data visualisation does not cherry-pick or silo educational objectives – aligned with gCMEp, it is not only balanced, fair and objective, it is also the primary source.

- HCPs can explore and jump to areas of interest – for example, comparing drug-vs-drug efficacy across demographics, adverse events, contraindications, or even disease awareness (burden and regional support networks), all without having to sit through an IME/CME module or wade through multiple websites, published papers, and hours of videos.

- Using AI and EHR data, data visualisations can support real-time, real-world evidence for best practice, including improved diagnosis, with verifiable context to expedite further change in practice.

All sounds great, right?

Well, some important considerations remain. Can we be sure that HCPs trust data visualisation? Surprisingly, there isn’t much data on this question specifically, and we fully intend to commission an independent study to address it.

But what we do know from a number of reports is that pharmaceutical companies are not providing HCPs with what they need to make rapid, fully-informed clinical decisions based on data.

It is possible that HCPs might need additional support or explanations to feel more confident in using dataviz as a go-to trusted source, but once this hypothetical barrier is overcome (which it will be), pharma could deliver exactly what HCPs have been asking for all this time. In other words, stop trying to convince HCPs they can trust you, when they only care about one thing: trusting your data.

What HCPs really want

Data visualisation is not new. Pie charts and infographics are data visualisations, after all. And pharma will no doubt continue to pay agencies millions to create marketing messaging and aesthetic visuals based on educational objectives taken from the data. But this is where infogr8 differs from the agency model.

We match up medical subject matter experts with our trusted network of more than 100 of the world’s leading dataviz professionals. That way, we never compromise on our shared mission to allow the data to tell its own stories, treating it with the respect that it deserves. And that, after all, is what HCPs really want.

If you’re an HCP, a pharma representative, or anyone else for that matter, and you’d like to send me any thoughts on the above (whether you agree or not), you can reach out to me by email.